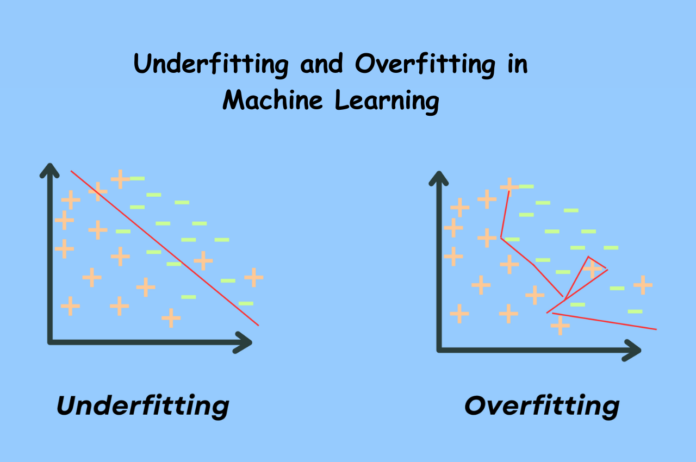

Underfitting and Overfitting are common issues in machine learning that can hinder model performance. Understanding the reasons behind these problems and taking steps to address them can greatly improve the overall performance of your model. Let’s delve deeper into the distinction between Underfitting and Overfitting using a hypothetical scenario.

Let’s consider a scenario where two children are preparing for a math exam. One child has only learned addition due to time constraints, while the other child has memorized all the problems in the math book but lacks a deep understanding of math concepts. During the exam, the first child struggles with problems involving subtraction, multiplication, and division, while the second child can only solve problems from the memorized book. If the exam includes a variety of math questions beyond their preparation, both children would likely fail.

Machine learning algorithms can exhibit behavior similar to these children. Some algorithms underfit by capturing only a small part of the pattern in the training data, while others overfit by memorizing the entire dataset and then struggling with unseen data. Understanding the concepts of Underfitting and Overfitting is crucial in improving model performance.

This article will discuss the importance of generalization, bias-variance tradeoffs, and their relationship to Underfitting and Overfitting in machine learning. We will also explore methods to detect and prevent these issues for optimal model performance.

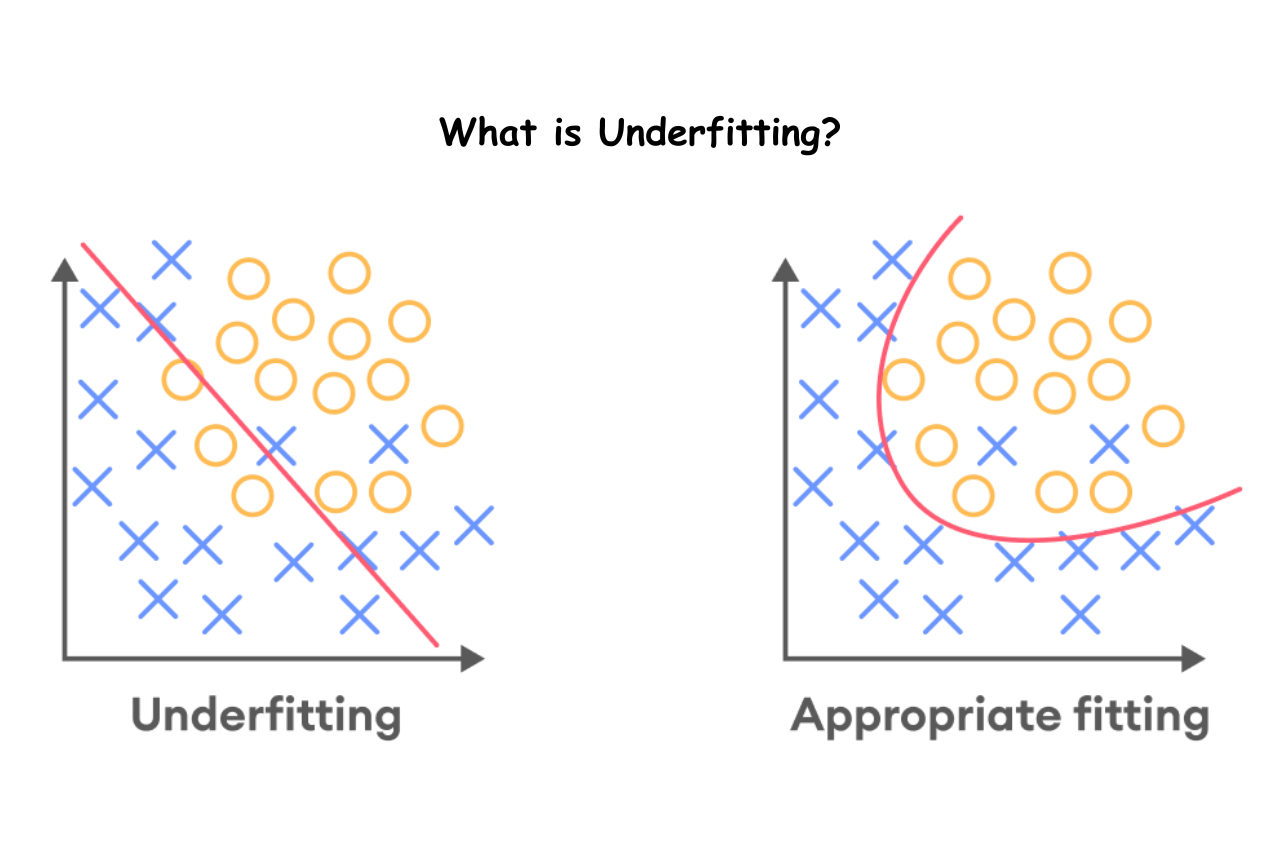

What is Underfitting?

Underfitting refers to the situation when a model fails to effectively learn the patterns present in the training data, leading to inadequate generalization on new data. Consequently, an underfit model exhibits subpar performance on the training data and produces unreliable predictions. This phenomenon arises from a combination of high bias and low variance.
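For readers who want the terms pinned down, bias and variance are usually defined through the standard decomposition of expected squared error. The notation here is an assumption of this sketch, not something the article defines: the data follow $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, and $\hat{f}$ is the model learned from a random training set.

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
+ \sigma^2
$$

An underfit model is dominated by the first term: it is wrong in the same way no matter which training set it sees.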

Reasons for Underfitting

- The training data is unclean and contains noise.

- The model exhibits significant bias.

- The training dataset is too small.

- The model is overly simplistic.
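To make the "overly simplistic model" case concrete, here is a minimal sketch in plain Python. The data (a noise-free `y = x^2` curve) and the helper names `fit_line` and `mse` are assumptions chosen for illustration. A straight line cannot bend, so its error stays high on the training points and the test points alike.

```python
# Underfitting sketch: a straight line fitted to quadratic data.

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mse(predict, xs, ys):
    """Mean squared error of a prediction function on (xs, ys)."""
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data: the true relationship is y = x^2, with no noise.
train_x = [-3, -2, -1, 0, 1, 2, 3]
train_y = [x ** 2 for x in train_x]
test_x = [-2.5, -1.5, -0.5, 0.5, 1.5, 2.5]
test_y = [x ** 2 for x in test_x]

slope, intercept = fit_line(train_x, train_y)


def line(x):
    return slope * x + intercept


train_mse = mse(line, train_x, train_y)
test_mse = mse(line, test_x, test_y)
print(f"train MSE = {train_mse:.2f}, test MSE = {test_mse:.2f}")
```

The signature of underfitting is that both numbers are large, not that they differ from each other.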

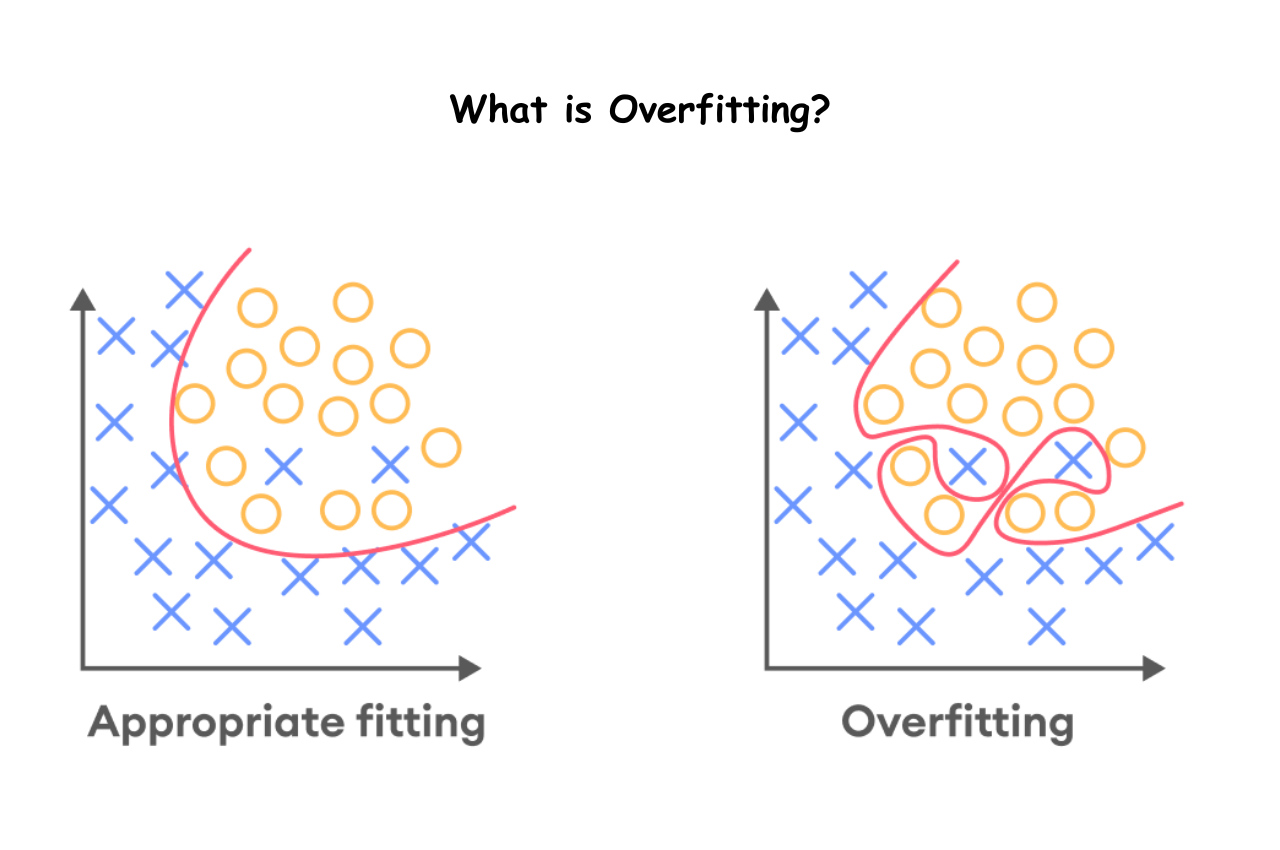

What is Overfitting?

Overfitting occurs when a model demonstrates excellent performance on training data but fails to perform well on test data (new data). In such instances, the machine learning model becomes overly focused on the intricacies and noise present in the training data, leading to a detrimental impact on its performance when applied to test data. Overfitting can arise as a result of low bias and high variance.

Reasons for Overfitting

- The training data is uncleaned and includes noise.

- The model exhibits significant variance.

- The training dataset is too small.

- The complexity of the model is excessive.
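The opposite failure can be sketched the same way. The example below assumes a hypothetical signal `y = 2x` whose training labels carry a deterministic +1/-1 disturbance standing in for noise. The "memorizer" is a 1-nearest-neighbour lookup, used here only as a stand-in for an overly flexible model; `make_memorizer`, `fit_line`, and `mse` are illustrative names.

```python
# Overfitting sketch: a model that memorizes noisy training labels.

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def make_memorizer(xs, ys):
    """1-nearest-neighbour regressor: returns the label of the closest training x."""
    pairs = list(zip(xs, ys))

    def predict(x):
        return min(pairs, key=lambda p: abs(p[0] - x))[1]

    return predict


def mse(predict, xs, ys):
    """Mean squared error of a prediction function on (xs, ys)."""
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data: signal y = 2x; training labels are shifted by +1 or -1.
train_x = list(range(10))
train_y = [2 * x + (1 if x % 2 == 0 else -1) for x in train_x]
# Test points sit between the training points and carry the clean signal.
test_x = [x + 0.4 for x in range(10)]
test_y = [2 * x for x in test_x]

memorizer = make_memorizer(train_x, train_y)
slope, intercept = fit_line(train_x, train_y)


def line(x):
    return slope * x + intercept


memo_train, memo_test = mse(memorizer, train_x, train_y), mse(memorizer, test_x, test_y)
line_train, line_test = mse(line, train_x, train_y), mse(line, test_x, test_y)
print(f"memorizer: train MSE = {memo_train:.2f}, test MSE = {memo_test:.2f}")
print(f"line:      train MSE = {line_train:.2f}, test MSE = {line_test:.2f}")
```

The memorizer has zero training error because it reproduces every disturbed label, and it pays for that on the test points. The simpler line has a worse training score but a much better test score.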

Underfitting in Machine Learning

Underfitting occurs when a statistical model or machine learning algorithm is too simplistic to capture the complexities within the data. This leads to poor performance on both training and testing data, resulting in inaccurate predictions on new, unseen examples. To combat underfitting, use a more complex model, improve the feature representation, or reduce regularization.
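As a rough illustration of the "improved feature representation" remedy, the sketch below fits the same simple linear model twice on hypothetical `y = x^2` data: once on the raw input `x`, and once on the engineered feature `x^2`. All names and data are assumptions for illustration.

```python
# Fixing underfitting by giving a linear model a better feature.

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mse(predictions, ys):
    """Mean squared error between predictions and targets."""
    return sum((p - y) ** 2 for p, y in zip(predictions, ys)) / len(ys)


# Hypothetical data: the true relationship is y = x^2.
train_x = [-3, -2, -1, 0, 1, 2, 3]
train_y = [x ** 2 for x in train_x]
test_x = [-2.5, -1.5, -0.5, 0.5, 1.5, 2.5]
test_y = [x ** 2 for x in test_x]

# Model 1: linear in the raw input x (too simple for this data).
a1, b1 = fit_line(train_x, train_y)
plain_test = mse([a1 * x + b1 for x in test_x], test_y)

# Model 2: the same linear model, but fed the feature z = x^2.
a2, b2 = fit_line([x ** 2 for x in train_x], train_y)
rich_test = mse([a2 * x ** 2 + b2 for x in test_x], test_y)

print(f"raw feature x:   test MSE = {plain_test:.2f}")
print(f"feature x ** 2:  test MSE = {rich_test:.2f}")
```

The learning algorithm is unchanged between the two runs. Only the representation of the input changed, and that alone removes the underfit.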

Overfitting in Machine Learning

Overfitting occurs when a statistical model fails to predict accurately on testing data because it has fit the training data too closely, learning from noise and inaccurate entries along with the real pattern. This typically happens when the model is too flexible for the amount of data available, and it shows up as high variance: small changes in the training set produce large changes in the predictions. Non-parametric and non-linear methods are common culprits, as they have more flexibility in constructing models from the dataset and can produce unrealistic ones. To prevent overfitting, it is advisable to use linear algorithms for linear data or to constrain parameters such as the maximum depth when using decision trees.
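The effect of limiting tree depth can be sketched with a tiny hand-written regression tree. This is an illustrative toy, not a library implementation: it handles one input feature, assumes distinct `x` values sorted in ascending order, and the step-shaped data with a +1/-1 disturbance is hypothetical. In practice you would reach for a library parameter such as `max_depth` in scikit-learn's `DecisionTreeRegressor`.

```python
# Depth-limited vs. fully grown regression tree on noisy step data.

def sse(points):
    """Sum of squared errors of the y values around their mean."""
    mean = sum(y for _, y in points) / len(points)
    return sum((y - mean) ** 2 for _, y in points)


def build_tree(points, max_depth):
    """Fit a 1-D regression tree on (x, y) pairs sorted by distinct x."""
    mean = sum(y for _, y in points) / len(points)
    if max_depth == 0 or len(points) < 2:
        return {"value": mean}
    # Try every split between neighbouring points; keep the lowest total SSE.
    best_i = min(range(1, len(points)),
                 key=lambda i: sse(points[:i]) + sse(points[i:]))
    threshold = (points[best_i - 1][0] + points[best_i][0]) / 2
    return {
        "threshold": threshold,
        "left": build_tree(points[:best_i], max_depth - 1),
        "right": build_tree(points[best_i:], max_depth - 1),
    }


def predict(tree, x):
    """Walk down the tree until a leaf is reached."""
    while "value" not in tree:
        tree = tree["left"] if x < tree["threshold"] else tree["right"]
    return tree["value"]


def tree_mse(tree, data):
    return sum((predict(tree, x) - y) ** 2 for x, y in data) / len(data)


def signal(x):
    """Hypothetical true pattern: a single step at x = 5."""
    return 0.0 if x < 5 else 10.0


# Training labels carry a +1/-1 disturbance; test labels are the clean signal.
train = [(x, signal(x) + (1 if x % 2 == 0 else -1)) for x in range(10)]
test = [(x + 0.4, signal(x + 0.4)) for x in range(10)]

shallow = build_tree(train, max_depth=1)   # one split only
deep = build_tree(train, max_depth=10)     # deep enough to isolate every point

for name, tree in [("max_depth=1", shallow), ("max_depth=10", deep)]:
    print(f"{name}: train MSE = {tree_mse(tree, train):.2f}, "
          f"test MSE = {tree_mse(tree, test):.2f}")
```

The deep tree drives training error to zero by giving every point its own leaf, which means it has learned the disturbance as well as the step. The shallow tree averages the disturbance away and generalizes better on this data.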

Overfitting is a challenge where machine learning algorithms perform well on training data but struggle with unseen data during evaluation.

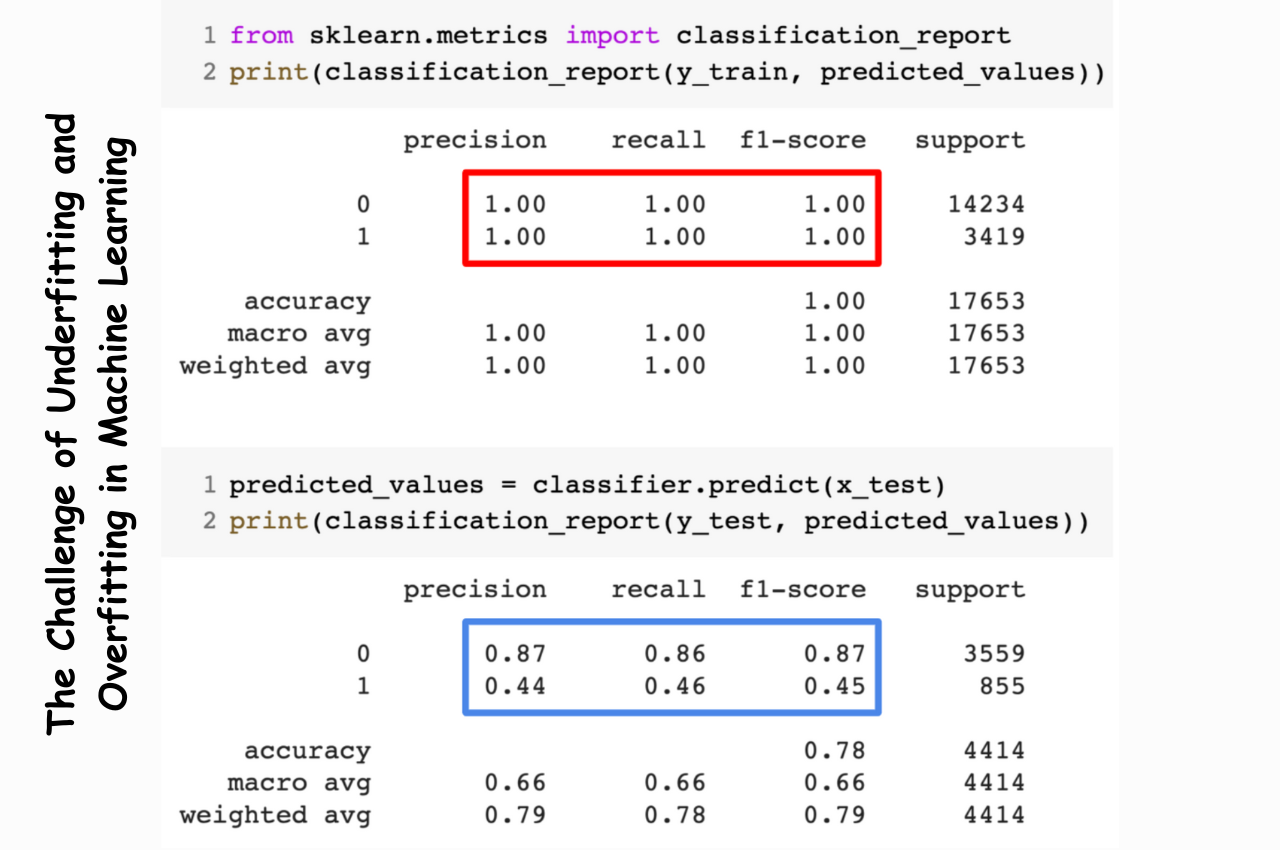

The Challenge of Underfitting and Overfitting in Machine Learning

This question is bound to come up during a data scientist interview.

Can you provide a simple explanation of underfitting and overfitting in the context of machine learning? Describe it in a way that even someone without technical knowledge can understand.

Your ability to explain this in a non-technical and easily comprehensible manner could greatly influence your suitability for the data science role!

Even when we’re working on a machine learning project, we often encounter situations where there are unexpected differences in performance or error rates between the training set and the test set (as shown below). How can a model perform exceptionally well on the training set but poorly on the test set? This occurs quite frequently when I work with tree-based predictive models. Due to the nature of the algorithms, it becomes quite challenging to avoid falling into the overfitting trap!

Furthermore, it can be quite overwhelming when we are unable to identify the underlying reason behind the unusual behavior of our predictive model.

Based on my personal experience, if you ask any experienced data scientist about this, they usually start discussing a range of complex terms like Overfitting, Underfitting, Bias, and Variance. However, very few people delve into the intuition behind these machine learning concepts. Let’s address that, shall we?

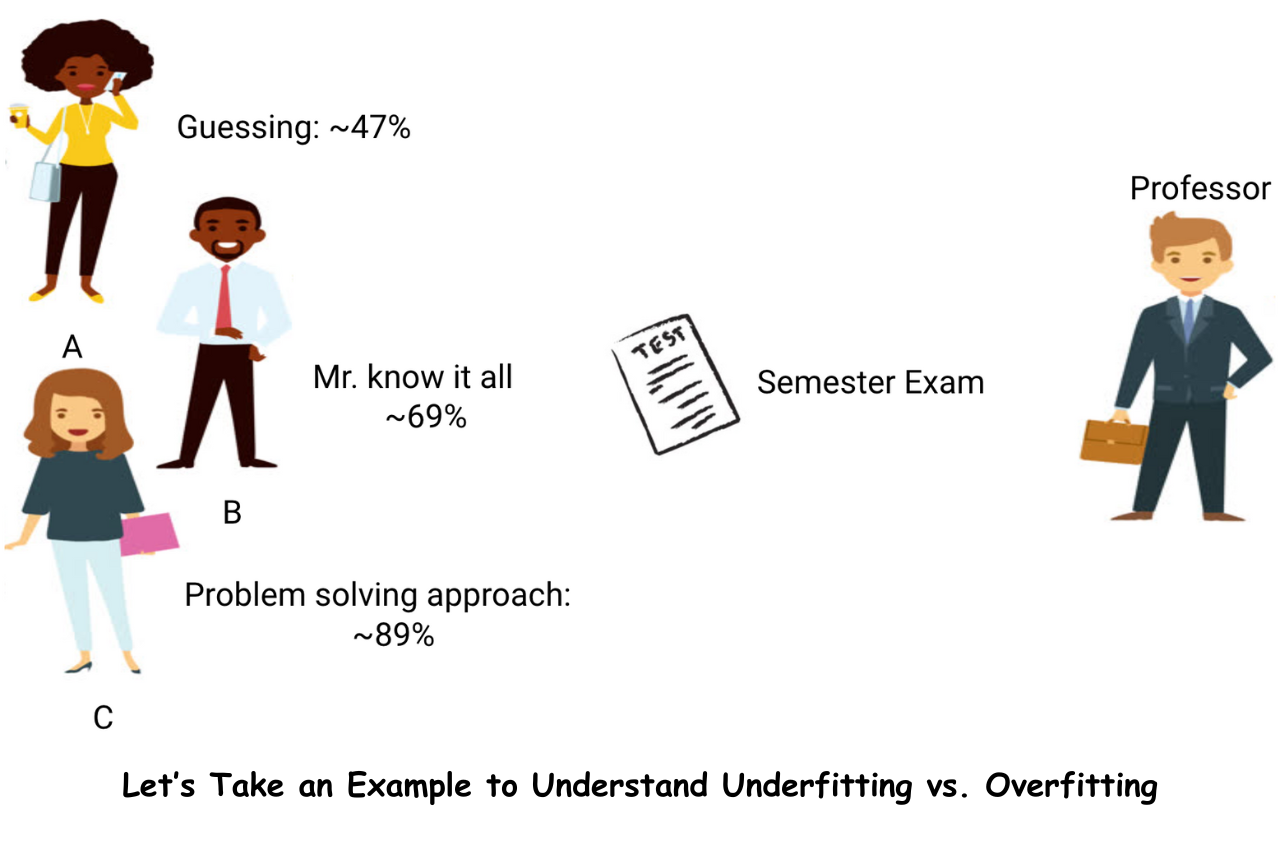

Let’s Take an Example to Understand Underfitting vs. Overfitting

I aim to illustrate these concepts through a practical, real-life scenario. While many discuss the theoretical aspect, I believe it is crucial to demonstrate the workings of underfitting and overfitting visually.

Let’s revisit our college years to delve into this topic. Imagine a mathematics course with a professor and three students.

Within any educational setting, students can generally be categorized into three groups. Let’s discuss each group individually.

Student A lacks enthusiasm for mathematics. She shows little interest in the subject and pays minimal attention to the professor and the content being presented.

Student B is extremely competitive. He devotes his effort to memorizing every question taught in class rather than focusing on the fundamental concepts. Essentially, he has no interest in acquiring problem-solving skills.

Finally, there is the ideal student, C. Her primary focus is on understanding the fundamental principles and adopting a problem-solving mindset, rather than simply committing the provided solutions to memory.

We are all familiar with the typical classroom scenario. The professor delivers lectures, teaching the students about various problems and their solutions. At the end of the day, the professor administers a quiz based on the topics covered in class.

The real challenge arises during the semester tests set by the school. This is when new and unfamiliar questions (unseen data) are presented to the students. These questions have not been encountered or solved in the classroom setting. Does this sound familiar?

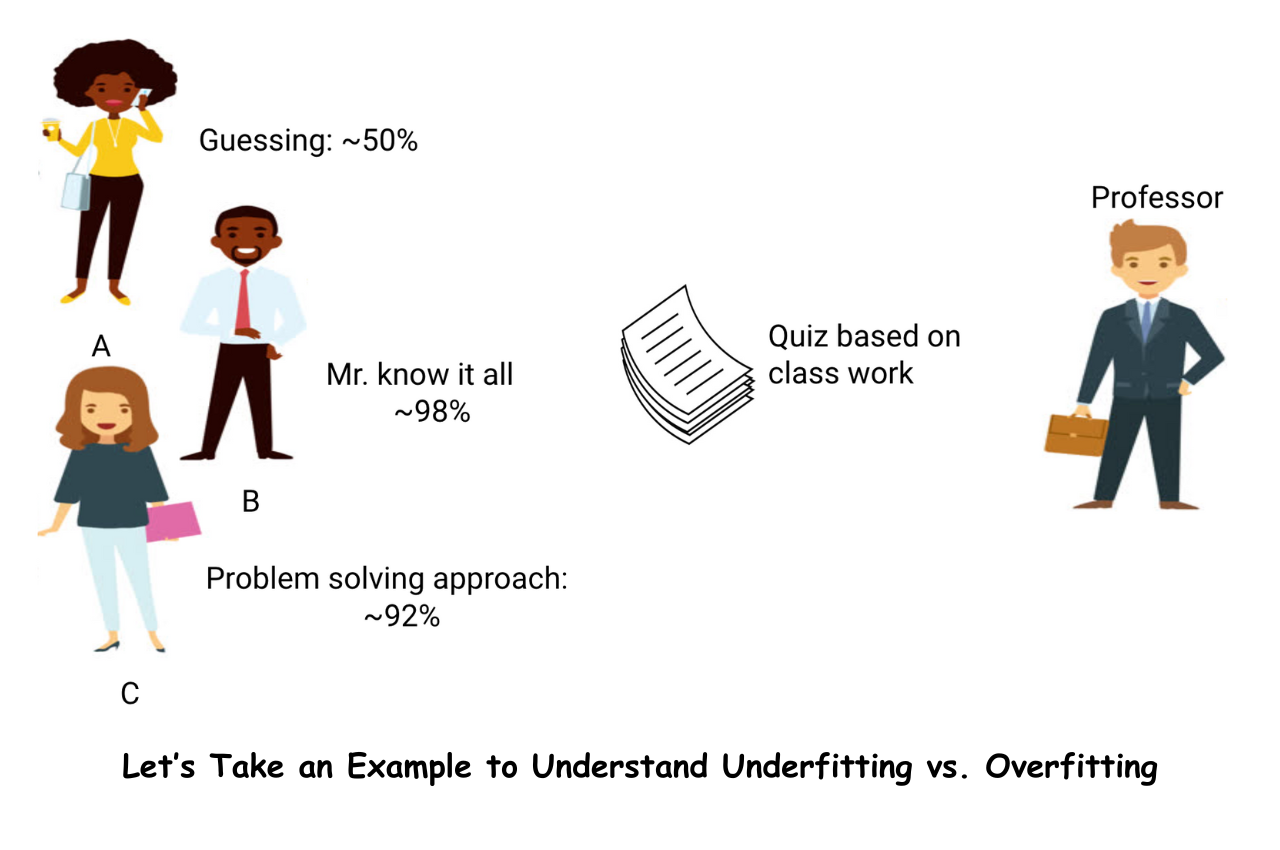

Now, let’s delve into what occurs when the teacher conducts a classroom test at the end of the day:

- Student A, lost in her own thoughts, made educated guesses and achieved a test score of around 50%.

- Student B, who had diligently committed every question taught in class to memory, was able to recall and answer nearly every question, resulting in an impressive 98% on the class test.

- Student C, on the other hand, applied the problem-solving approach she had learned in the classroom to solve all the questions and achieved a commendable score of 92%.

It is evident that the student who relies solely on memorization is achieving higher scores with relative ease.

Now, let’s delve into an intriguing scenario. Let’s examine what unfolds during the monthly test, where students are confronted with unfamiliar questions that were not covered in class by the teacher.

- Student A’s performance has remained consistent, with approximately a 50% success rate in answering questions correctly.

- Student B’s score dropped significantly: he had relied on memorizing the problems from class, and the monthly test presented unfamiliar questions.

- Student C’s score stayed relatively stable as she concentrated on understanding the problem-solving method, enabling her to apply learned concepts to solve new and unfamiliar questions effectively.
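The mapping back to machine learning is direct: Student A behaves like an underfit model, Student B like an overfit model, and Student C like a model that generalizes well. The sketch below turns that reading into a small rule of thumb. The function name `diagnose` and both thresholds are assumptions, and the monthly-test scores for Students B and C are hypothetical placeholders, since the story only says that B dropped sharply and C stayed roughly stable.

```python
# Rule-of-thumb diagnosis from a training score and a test score.

def diagnose(train_score, test_score, good_enough=0.8, max_gap=0.1):
    """Label a model from its train/test scores (both thresholds are assumed)."""
    if train_score < good_enough:
        return "underfitting"   # poor even on material it has seen
    if train_score - test_score > max_gap:
        return "overfitting"    # strong on seen material, weak on unseen
    return "good fit"


# (class-test score, monthly-test score) for each student.
students = {
    "A": (0.50, 0.50),  # both scores given in the story
    "B": (0.98, 0.60),  # monthly score is a hypothetical value
    "C": (0.92, 0.90),  # monthly score is a hypothetical value
}

for name, (class_test, monthly_test) in students.items():
    print(f"Student {name}: {diagnose(class_test, monthly_test)}")
```

The same two-number comparison, training score against held-out score, is the usual first check when a model behaves unexpectedly.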

Final thoughts

The concepts of Underfitting and Overfitting play a crucial role in the bias-variance trade-offs within machine learning. Throughout this tutorial, you have gained a fundamental understanding of Underfitting and Overfitting and the methods to prevent them.

If you have any inquiries regarding this tutorial on Underfitting and Overfitting in machine learning, please feel free to ask your questions in the comments section. We are here to assist you in finding the answers you seek.

Happy learning!